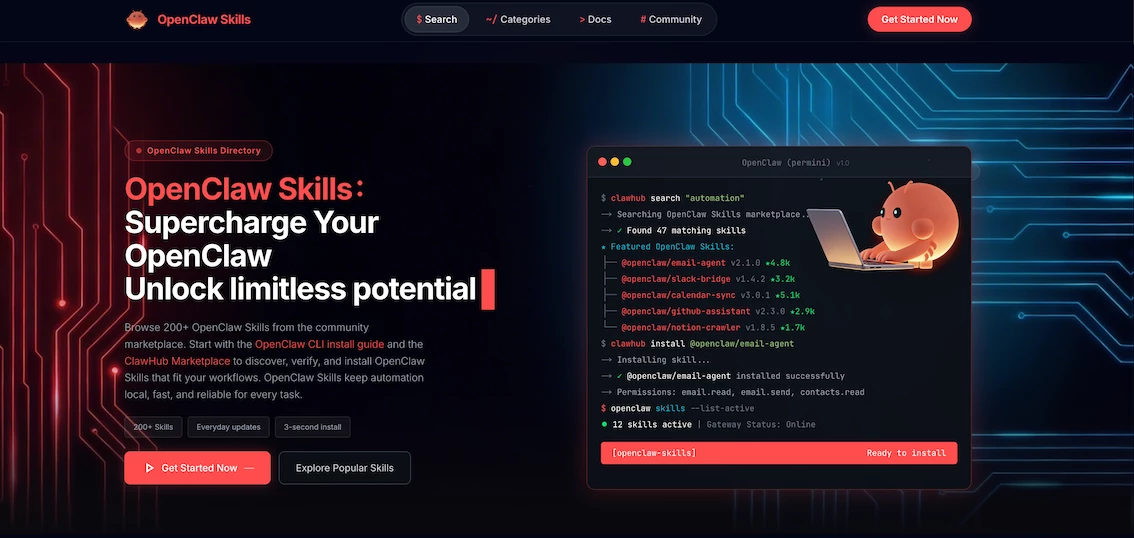

I built the OpenClaw skills directory because I kept seeing the same pattern: people were overwhelmed by lists of tools that looked impressive but were brittle in real workflows. Search for “best OpenClaw skills” and you get a pile of names. What you do not get is the one thing a user actually needs: confidence that a skill will run, stay stable, and not put their system at risk.

This post is not a theoretical take. It is the standard I use as the person who has to decide what deserves the label best. It is a first-person, field-tested framework that turns a subjective label into a repeatable evaluation. Use it to build your own list, to vet a new skill before you adopt it, or to explain why a flashy skill is not actually worth trusting.

What people really mean by best

When users type “best openclaw skills” into a search bar, they are not asking for the largest list. They are asking for less regret. They want to skip the two hours of trial and error, and they want to avoid a workflow that breaks three weeks later. In practice, best means three things:

- The skill is easy to run the first time.

- The skill stays reliable after the novelty wears off.

- The skill does not create hidden risk.

Everything else is decoration. If a skill checks those three boxes, it is a candidate for best. If it does not, it is not.

The four-layer standard I use

I score every candidate skill across four layers. If a skill fails any layer, it drops from the best list. If it passes all four, it earns a spot. This keeps the process honest and repeatable.

Layer 1: Spec clarity and structural integrity

OpenClaw skills are structured. The center of gravity is SKILL.md. If a skill does not clearly describe what it does, how to run it, and what it needs, it fails at the most basic level. Spec clarity means a user can understand the input, the output, the requirements, and the boundaries. The structure is not fluff. It is the first trust signal. If you cannot trust the structure, you cannot trust the workflow. The official OpenClaw skills documentation makes this explicit. A skill is a package with a clear structure and expected behavior, and SKILL.md is the contract. If the contract is vague, the skill is not best. https://docs.openclaw.ai/tools/skills

This layer also includes the right metadata. A user should know what will happen before they run it. I downgrade any skill that hides key requirements or glosses over permissions.

Layer 2: Time to first success

A skill should deliver a real outcome in minutes, not hours. When I evaluate a skill, I ask one question: can a new user run a minimal workflow and get a tangible result within five minutes? This is not a harsh requirement. It is the only way to ensure adoption is realistic. If a skill requires a dozen configuration steps and still does not produce a clear output, it does not deserve the best label.

When testing, I look for a minimal task that mirrors the promise. If the skill claims to organize files, I expect it to sort a sample folder quickly. If it claims to generate a weekly summary, I expect to see a first draft from a short input set. The gap between promise and outcome is where trust is lost.
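The five-minute check above can be timed rather than estimated. The following is a minimal sketch, not a tool the directory ships: `time_first_run` and `FirstRun` are hypothetical names, and the command passed in is a stand-in for whatever quick-start command a skill documents.

```python
import subprocess
import sys
import time
from dataclasses import dataclass

FIVE_MINUTES = 300.0  # seconds; the budget named in the text


@dataclass
class FirstRun:
    succeeded: bool         # command exited with status 0
    within_budget: bool     # finished before the time budget ran out
    elapsed_seconds: float
    output: str


def time_first_run(command: list[str], budget_seconds: float = FIVE_MINUTES) -> FirstRun:
    """Run a quick-start command once and record how long it took."""
    start = time.monotonic()
    try:
        done = subprocess.run(
            command, capture_output=True, text=True, timeout=budget_seconds
        )
    except subprocess.TimeoutExpired:
        # The command was still running when the budget ran out.
        return FirstRun(False, False, time.monotonic() - start, "")
    elapsed = time.monotonic() - start
    return FirstRun(done.returncode == 0, True, elapsed, done.stdout)


if __name__ == "__main__":
    # Stand-in for a skill's documented quick-start command (hypothetical).
    result = time_first_run([sys.executable, "-c", "print('sorted 3 files')"])
    print(result)
```

A timer only covers the mechanical half of the test. Whether the output actually matches the skill's promise still needs a human look.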

Layer 3: Maintenance signal and operational resilience

Best is not just about today. It is about next month. A skill with great functionality but no maintenance signal is a liability. I look for updates, issue resolution, and version history. This is where ClawHub helps: when skills are registered and versioned, you can observe if a project is alive or frozen. https://docs.openclaw.ai/tools/clawhub

Maintenance signal is not just release frequency. It is clarity. Does the maintainer document breaking changes? Do they explain what changed? Do they respond to user reports? A skill that stays stable across small updates is more valuable than a skill that ships new features every week but breaks old workflows.

Layer 4: Risk and permissions

A skill that performs well but demands broad permissions is not best, no matter how attractive the feature list. I evaluate skills through the principle of least privilege. The NIST glossary defines least privilege as granting only the minimum permissions required for the task. This is not a theoretical security policy. It is a practical filter. If a skill requests more access than it needs, it loses trust immediately. https://csrc.nist.gov/glossary/term/least_privilege

I also cross-check for common security failure patterns. The OWASP Top Ten exists for a reason: it is a consensus view of the most critical security risks to web applications. When a skill touches authentication, file access, or external services, I use OWASP as a quick sanity checklist. https://owasp.org/Top10/2025/

Risk also includes supply chain visibility. If a skill depends on opaque binaries or unexplained third-party downloads, I downgrade it. CISA guidance on software supply chain security reinforces the importance of transparency and verification across the chain. https://www.cisa.gov/resources-tools/resources/securing-software-supply-chain-recommended-practices-guide-customers-and
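The least-privilege filter in this layer reduces to a set comparison: what a skill requests versus what its stated task needs. Here is a small sketch under assumptions. The permission names are invented for illustration and are not OpenClaw identifiers, and deciding what a task "needs" remains a manual judgment.

```python
def excess_permissions(requested: set[str], needed: set[str]) -> set[str]:
    """Return permissions a skill asks for beyond what its task needs."""
    return requested - needed


# Hypothetical permission names, for illustration only.
requested = {"fs:read", "fs:write", "network", "shell"}
needed = {"fs:read", "fs:write"}  # what a file-organizing skill plausibly needs

extra = excess_permissions(requested, needed)
if extra:
    print("Requests more than it needs:", ", ".join(sorted(extra)))
```

An empty result does not make a skill safe. It only means the requested access matches the stated task.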

The scoring rubric I actually use

To keep decisions consistent, I use a simple rubric. Each layer is scored 1 to 5. A skill must score at least 4 in all four layers to enter the best list. If it scores a 3 in any layer, it can be listed as usable but not recommended.

- 5: Clear, reliable, documented, low risk.

- 4: Strong overall, minor gaps but no critical issues.

- 3: Usable but inconsistent or unclear.

- 2: Risky, incomplete, or hard to run.

- 1: Broken, misleading, or unsafe.

This forces discipline. It also makes it easier to explain to the community why a skill is in or out.
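The rubric is simple enough to write down as a function, which helps keep decisions consistent across reviewers. This is a sketch: the layer keys and the `classify` name are my own, and the rubric as stated does not say what happens to a skill that scores below 3, so the "not listed" outcome is an assumption.

```python
LAYERS = ("spec_clarity", "time_to_first_success", "maintenance", "risk")


def classify(scores: dict[str, int]) -> str:
    """Map four layer scores (1 to 5) to a listing decision."""
    missing = [layer for layer in LAYERS if layer not in scores]
    if missing:
        raise ValueError(f"missing scores for: {missing}")
    values = [scores[layer] for layer in LAYERS]
    if any(v < 1 or v > 5 for v in values):
        raise ValueError("each score must be between 1 and 5")

    lowest = min(values)  # the weakest layer decides the outcome
    if lowest >= 4:
        return "best"
    if lowest == 3:
        return "usable, not recommended"
    # Assumption: the rubric is silent on scores below 3; excluded here.
    return "not listed"


print(classify({
    "spec_clarity": 5,
    "time_to_first_success": 4,
    "maintenance": 3,
    "risk": 5,
}))
```

Taking the minimum rather than an average is deliberate: a strong feature score should not be able to buy back a weak risk score.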

How I operationalize the standard on a directory site

A standard is useless if it stays in a spreadsheet. I push these signals into the site experience so users can make better choices faster. That means:

- Clear labels that distinguish recommended from experimental.

- Permission badges that warn about higher-risk access.

- A short quick-start block that proves time to first success.

- A visible update timeline so users can see maintenance signal.

The directory is not a trophy case. It is a decision engine. If a user needs to click through five sections to understand whether a skill is safe, I have failed.

A real example: the high-star skill I downgraded

One skill looked perfect on paper. It had stars, documentation, and a strong feature list. But the install flow required a pasted command that pulled dependencies from a mirror without explanation. The output worked, but the risk profile did not. I downgraded it from best to cautionary. Within two weeks, I received three emails from users who were grateful that the risk was made explicit. The lesson was simple: popularity is not a substitute for trust.

Another real example: the quiet skill that became best

Another skill had low visibility but impeccable structure. Its SKILL.md was clear, the onboarding path took three minutes, and it had a clean change log. It did not look flashy. It did not trend. But it never failed in testing. That skill is now on my top list, because best is about reliability more than marketing.

How I test a skill in practice

I use a simple three-pass test to avoid overthinking.

- Pass one is a cold start. I assume I know nothing and follow the instructions exactly as written. If the path is unclear or the outcome is vague, the skill fails.

- Pass two is a constrained scenario. I use a small, real dataset or a tiny folder and I check whether the output is accurate. This is where I look for off-by-one errors, missing edge cases, or settings that are not documented.

- Pass three is a resilience test. I deliberately introduce a small error, like an empty input or a permission that is missing, and I see how the skill behaves. If it fails silently, I downgrade it. If it produces a clear message, it stays in the running.
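The resilience pass can be partly scripted. The sketch below feeds a command empty input and flags the narrow case of a non-zero exit with no message at all. The function names are hypothetical, and the commands are stand-ins for a real skill invocation. It does not catch a skill that exits successfully while producing wrong output, which still needs a manual check.

```python
import subprocess
import sys


def run_with_empty_input(
    command: list[str], timeout_seconds: float = 60.0
) -> subprocess.CompletedProcess:
    """Run a command with empty stdin and capture what it prints."""
    return subprocess.run(
        command, input="", capture_output=True, text=True, timeout=timeout_seconds
    )


def fails_silently(result: subprocess.CompletedProcess) -> bool:
    """True when the command exits non-zero without printing any message."""
    said_something = bool(result.stdout.strip() or result.stderr.strip())
    return result.returncode != 0 and not said_something


if __name__ == "__main__":
    # Two stand-in commands: one exits quietly, one explains itself.
    quiet = [sys.executable, "-c", "import sys; sys.exit(1)"]
    loud = [sys.executable, "-c", "import sys; sys.exit('error: input is empty')"]
    for command in (quiet, loud):
        print(fails_silently(run_with_empty_input(command)))
```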

The goal of this test is not to punish a skill. The goal is to predict how a new user will experience it on day one. A best skill is not a perfect piece of software. It is a skill that handles real conditions without surprising people.

The role of user feedback in the best list

A directory is only as good as its feedback loop. I track support tickets and short comments because they reveal the gap between spec and reality. If a skill repeatedly generates confusion, it does not matter how clean the code is. I either downgrade it or I add a clear warning. When the same skill earns positive notes like “worked on the first try” or “instructions were clear,” I treat that as a strong signal. In a list of best OpenClaw skills, user feedback is the real tiebreaker.

How I explain tradeoffs on the page

A best list still needs nuance. Some skills are fast but fragile. Others are slow to start but extremely reliable. I make those tradeoffs visible on the page. I do not bury them in footnotes. I add short, honest notes such as “fast to run, but requires a paid API” or “stable but has a steep setup curve.” That transparency prevents the worst kind of disappointment: a user who feels tricked. If a user can see a tradeoff and still chooses the skill, they are far more likely to stick with it.

The review cadence that keeps the list honest

I review best candidates on a fixed cadence. Every month I re-check permissions and run a minimal task. Every quarter I review maintenance signal and scan for breaking changes. This keeps the list accurate as the ecosystem evolves. Without cadence, a best list becomes outdated marketing.
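A cadence like this is easy to let slip, so it can help to compute what is due instead of remembering it. The sketch below is one possible reading: it treats "every month" and "every quarter" as calendar boundaries rather than rolling 30 or 90 day windows, which is my assumption, and the function names are hypothetical.

```python
from datetime import date


def quarter(d: date) -> tuple[int, int]:
    """Return (year, quarter number 1 to 4) for a date."""
    return (d.year, (d.month - 1) // 3 + 1)


def reviews_due(last_monthly: date, last_quarterly: date, today: date) -> list[str]:
    """List the reviews that are due, using calendar month and quarter boundaries."""
    due = []
    if (today.year, today.month) > (last_monthly.year, last_monthly.month):
        due.append("monthly: re-check permissions, run a minimal task")
    if quarter(today) > quarter(last_quarterly):
        due.append("quarterly: review maintenance signal, scan for breaking changes")
    return due


for item in reviews_due(date(2025, 1, 15), date(2025, 1, 15), date(2025, 4, 2)):
    print(item)
```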

The checklist you can reuse

If you are building your own best list, use this checklist:

- Does SKILL.md clearly explain purpose, inputs, outputs, and dependencies?

- Can a new user complete a minimal task in under five minutes?

- Is there evidence of maintenance, updates, or issue resolution?

- Does the skill request only the permissions it needs?

- Can you explain the workflow to a non-expert without hand-waving?

If the answer is yes to all five, the skill is a strong candidate. If not, it is not best yet.

What best really means

Best is a promise. It tells a user that their time and their system are respected. It is not a number. It is not a trend. It is a commitment to reliability, clarity, and safety. When you apply this standard, you end up with fewer skills, but you end up with more trust.

Reference Sources

- NIST Glossary: Least Privilege https://csrc.nist.gov/glossary/term/least_privilege

- OWASP Top 10:2025 https://owasp.org/Top10/2025/

- CISA Software Supply Chain Guidance https://www.cisa.gov/resources-tools/resources/securing-software-supply-chain-recommended-practices-guide-customers-and